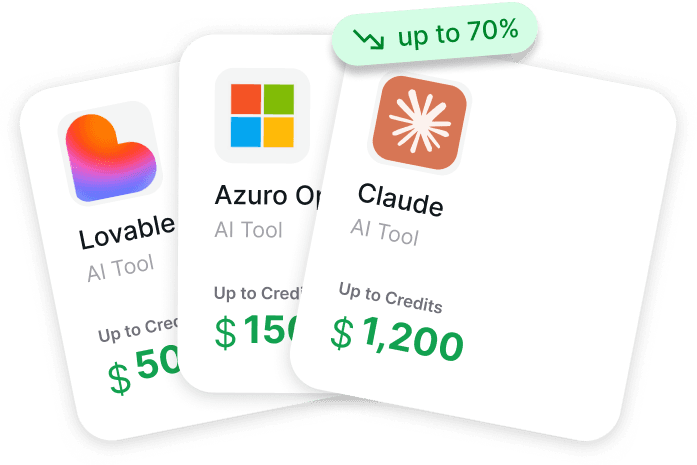

AI Perks curates and provides access to exclusive discounts, credits, and deals on AI tools, cloud services, and APIs to help startups and developers save money.

Groq Free Tier 2026: The Fastest Free LLM API on the Planet

Groq's free tier in 2026 provides 30,000 tokens per minute and 14,400 requests per day on a curated model lineup including Llama 3.1 8B, Llama 4 Scout, Qwen3 32B, and DeepSeek R1 Distill. No credit card required. Sub-second response times via Groq's custom LPU silicon.

For applications where inference speed matters more than absolute model quality (real-time chat, voice interfaces, search, classification), Groq's free tier is hard to beat. The catch: the model lineup is a curated set of open models, not frontier models. Combine it with free Claude or GPT credits from AI Perks for a premium fallback.

Top AI Credits for Startups

Apply directly through these verified programs.

Claude $10,000 credits

Eligible for early-stage startups

OpenAI $2,500 credits

Eligible for early-stage startups

Anthropic $25,000 credits

Eligible for early-stage startups

AWS $300,000 credits

Eligible for early-stage startups

Google Cloud $350,000 credits

Eligible for early-stage startups

Lovable $6,000 credits

Eligible for early-stage startups

What Groq Actually Is

Groq is not a model maker - it is an inference provider running custom LPU (Language Processing Unit) silicon optimized for LLM inference:

- Hardware: Custom LPU chips, not Nvidia GPUs

- Speed: 500-3,000+ tokens/sec output (vs 30-100 tokens/sec on typical Nvidia GPU-based inference)

- Latency: Sub-second first-token response

- Models: Open-source models (Llama, Qwen, DeepSeek, Mixtral)

- API: OpenAI-compatible

For real-time and high-throughput workloads, Groq is the speed champion in 2026.

Groq Free Tier Limits in Detail

| Model | TPM Limit | RPM Limit | RPD Limit |
|---|---|---|---|
| Llama 3.1 8B | 30,000 | 30 | 14,400 |
| Llama 4 Scout | 30,000 | 30 | 14,400 |
| Qwen3 32B | 30,000 | 30 | 14,400 |
| DeepSeek R1 Distill | 30,000 | 30 | 14,400 |
| Mixtral 8x7B | 30,000 | 30 | 14,400 |

- TPM (Tokens Per Minute): 30,000, input and output combined

- RPM (Requests Per Minute): 30

- RPD (Requests Per Day): 14,400

For most personal projects and prototypes, these limits are generous enough that you will rarely hit them.
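To see which limit binds first, it helps to turn the figures above into per-minute budgets. The sketch below uses only the numbers quoted in this section; the variable names are illustrative, and spreading requests evenly over the day is an assumption.

```python
# Free-tier figures quoted in the table above.
TPM_LIMIT = 30_000   # tokens per minute, input + output combined
RPM_LIMIT = 30       # requests per minute
RPD_LIMIT = 14_400   # requests per day

MINUTES_PER_DAY = 24 * 60

# With traffic spread evenly over 24 hours, the daily cap allows this many
# requests per minute on average.
sustained_rpm = RPD_LIMIT / MINUTES_PER_DAY

# Running at the full 30 RPM burst rate would use up the daily cap in this
# many hours.
hours_at_full_burst = RPD_LIMIT / RPM_LIMIT / 60

# At the full burst rate, each request can average this many tokens before
# the per-minute token limit is reached.
tokens_per_request_at_burst = TPM_LIMIT / RPM_LIMIT

print(f"Sustained average: {sustained_rpm:.0f} requests/minute")
print(f"Daily cap reached after {hours_at_full_burst:.0f} hours at {RPM_LIMIT} RPM")
print(f"Token budget at burst rate: {tokens_per_request_at_burst:.0f} tokens/request")
```

Under these numbers the daily cap works out to 10 requests per minute sustained, so the 30 RPM figure is better read as a burst allowance than as an all-day rate.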

Groq Paid Tier Pricing (When You Outgrow Free)

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| Llama 4 Scout | $0.50 | $1.50 |
| Llama 3.1 70B | $0.59 | $0.79 |
| Llama 3.1 405B | $1.79 | $1.79 |
| Mixtral 8x22B | $2.50 | $2.50 |

Groq's paid pricing is competitive with DeepSeek's while offering dramatically faster inference. For real-time workloads, the speed premium pays for itself.
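To get a feel for what these prices mean for a real workload, the table can be turned into a small estimator. This is a minimal sketch: the dictionary keys are the display names from the table (not API model IDs), and the monthly token counts are a hypothetical workload.

```python
# Paid-tier prices from the table above, in USD per 1M tokens: (input, output).
PRICES_PER_MILLION = {
    "Llama 4 Scout": (0.50, 1.50),
    "Llama 3.1 70B": (0.59, 0.79),
    "Llama 3.1 405B": (1.79, 1.79),
    "Mixtral 8x22B": (2.50, 2.50),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost for the given token counts."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: 10M input tokens and 2M output tokens per month.
for model in PRICES_PER_MILLION:
    cost = estimate_cost(model, input_tokens=10_000_000, output_tokens=2_000_000)
    print(f"{model}: ${cost:.2f}/month")
```

Because input and output are priced separately on some models, the ratio of prompt tokens to generated tokens can matter as much as the total volume.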

What Groq Free Tier Is Best For

Speed-Critical Use Cases

- Real-time chat - sub-second response feels instant

- Voice interfaces - low latency enables natural conversation

- Live transcription with AI editing

- Streaming search with AI ranking

High-Throughput Use Cases

- Bulk classification - 14,400 requests/day is enough for most tasks

- Embedding-style retrieval ranking (with appropriate models)

- Content moderation at moderate scale

- Quick summarization of news feeds

Cost-Sensitive Prototyping

- Hackathon projects - free tier covers the weekend

- Personal projects - no credit card barrier

- Educational projects - students can build without payment

How to Get Started with Groq Free

Step 1: Sign up at console.groq.com with email - no credit card.

Step 2: Generate an API key from the console.

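Step 3: Use the OpenAI-compatible SDK with the Groq endpoint:

```python
import os

from openai import OpenAI

# Point the OpenAI SDK at Groq's OpenAI-compatible endpoint.
client = OpenAI(
    api_key=os.environ["GROQ_API_KEY"],  # your "gsk_..." key from the console
    base_url="https://api.groq.com/openai/v1",
)

response = client.chat.completions.create(
    model="llama-4-scout",  # model name as given here; confirm the exact ID in the console
    messages=[{"role": "user", "content": "Hello"}],
)
print(response.choices[0].message.content)
```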

Step 4: Monitor usage in the Groq console dashboard.

Step 5: Get free Claude and GPT credits via AI Perks as a premium fallback for when Groq's model quality is insufficient.
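Once requests are flowing, a client-side throttle can help keep a script under the 30 requests/minute limit quoted earlier. The following is a minimal sketch using only the standard library; `RequestThrottle` is a hypothetical helper, not part of any Groq or OpenAI SDK, and it tracks request count only (not tokens per minute or the daily cap).

```python
import time
from collections import deque


class RequestThrottle:
    """Client-side sliding-window limiter: at most max_calls per period seconds."""

    def __init__(self, max_calls=30, period=60.0, clock=time.monotonic, sleep=time.sleep):
        self.max_calls = max_calls
        self.period = period
        self._clock = clock    # injectable for testing
        self._sleep = sleep    # injectable for testing
        self._calls = deque()  # timestamps of recent calls, oldest first

    def acquire(self):
        """Block until another request may be sent, then record it."""
        while True:
            now = self._clock()
            # Drop timestamps that have left the window.
            while self._calls and now - self._calls[0] >= self.period:
                self._calls.popleft()
            if len(self._calls) < self.max_calls:
                self._calls.append(now)
                return
            # Window is full: wait until the oldest call leaves it.
            self._sleep(self.period - (now - self._calls[0]))


throttle = RequestThrottle(max_calls=30, period=60.0)
throttle.acquire()  # call this right before each API request
```

A throttle like this smooths bursts on the client side; the server can still reject requests, so error handling for rate-limit responses remains necessary.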

Groq Free Tier vs Cerebras vs Together AI

The three biggest free inference providers in 2026:

| Provider | Free Tier | Speed | Models |
|---|---|---|---|
| Groq | 30K TPM, 14,400 RPD | 500-3,000 tok/s | Llama, Qwen, DeepSeek, Mixtral |
| Cerebras | 1M tokens/day | 2,600 tok/s | Llama 4 Scout, Qwen3 |
| Together AI | Limited free | 50-200 tok/s | 100+ models |

Groq has the highest peak speed. Cerebras gives more daily tokens. Together AI has the broadest model selection. A common setup is Groq as the primary provider, with Together AI for model variety.
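To make the speed column concrete, the quoted tokens-per-second figures can be converted into the time needed to generate one reply. This is a rough sketch: the 500-token reply length is a hypothetical value, and the calculation ignores first-token latency and network overhead.

```python
# Output speeds quoted in the comparison table, in tokens per second (low, high).
SPEEDS_TOK_PER_SEC = {
    "Groq": (500, 3_000),
    "Cerebras": (2_600, 2_600),
    "Together AI": (50, 200),
}

RESPONSE_TOKENS = 500  # hypothetical length of one chat reply


def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to stream `tokens` output tokens at a steady rate."""
    return tokens / tokens_per_second


for provider, (low, high) in SPEEDS_TOK_PER_SEC.items():
    fastest = generation_seconds(RESPONSE_TOKENS, high)
    slowest = generation_seconds(RESPONSE_TOKENS, low)
    print(f"{provider}: {fastest:.2f}s to {slowest:.2f}s for {RESPONSE_TOKENS} tokens")
```

At the quoted rates, a reply of this size takes about a second or less on the fastest providers and several seconds at 50-200 tok/s, which is the gap a user notices in a chat interface.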

Stacking Groq With Premium Free Credits

The smart 2026 stack uses Groq for speed-critical inference and Claude/GPT for quality-critical tasks:

Hybrid Stack

- Groq free tier for chat front-end speed: $0

- Free Anthropic credits for hard reasoning: $1,000-$25,000+

- Free OpenAI credits for tool-use agents: $500-$50,000+

- Total: $1,500-$75,000+ in stacked credits

Route by use case: Groq for "feel-instant" tasks, Claude/GPT for "must-be-right" tasks.
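The routing rule above can be sketched as a small lookup. The task labels and the `pick_provider` function are hypothetical; a real app would map its own request types, and the default for unknown tasks is an assumption.

```python
# Task labels are hypothetical; use whatever categories your app already has.
QUALITY_TASKS = {"reasoning", "tool_use", "vision"}


def pick_provider(task: str) -> str:
    """Return "frontier" for must-be-right tasks and "groq" for everything else."""
    if task in QUALITY_TASKS:
        return "frontier"  # Claude or GPT, paid for with credits
    # Everything else, including unknown task types, takes the fast path.
    # A stricter app might default to the frontier model instead.
    return "groq"


for task in ["chat", "reasoning", "vision", "classification"]:
    print(task, "->", pick_provider(task))
```

Keeping the routing decision in one function makes it easy to move a task type from one provider to the other as quality requirements change.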

How to Get Free Credits Across Providers

| Source | Available Credits | How to Get |
|---|---|---|
| Groq free tier (forever) | 30K TPM, 14,400 RPD | Direct signup |
| Free Anthropic credits | $1,000 - $25,000+ | AI Perks Guide |
| Free OpenAI credits | $500 - $50,000+ | AI Perks Guide |
| Free Gemini credits | $300 - $1,000 | AI Perks Guide |
| Bundled cloud founder programs | $5,000 - $100,000+ | AI Perks Guide |

Total potential: roughly $7,000 - $200,000+ in stacked credits, with Groq's free tier as the foundation.

The exact program names and application order are inside AI Perks. The AI Perks team comes from Y Combinator, Techstars, Antler, 500 Global, and Google for Startups.

Honest Limitations

What Groq Cannot Do

- Match Claude Opus 4.7 or GPT-5.5 quality on the hardest reasoning tasks

- Long context - 128K tokens max on most models (vs 200K+ on frontier models)

- Vision tasks - text-only inference

- Custom fine-tuning - hosted models only

- Native tool use at frontier reliability

Where Groq Wins

- Speed - 5-30x faster than typical GPU-based inference

- Cost - paid tier is competitive with DeepSeek

- Free tier - 30K TPM is generous

- Open models - no vendor lock-in to a specific lab

Step-by-Step: Build a Speed-First App with Groq

Step 1: Get free credits via AI Perks for premium fallback (Claude, GPT).

Step 2: Sign up at console.groq.com and generate an API key.

Step 3: Route 80% of inference to Groq for speed.

Step 4: Route hard tasks (reasoning, tool use, vision) to Claude or GPT via free credits.

Step 5: Monitor Groq usage - if you are hitting the 14,400 RPD cap, upgrade to the paid tier or split traffic across providers.
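For the monitoring step, a local counter can give early warning before the daily cap is reached. This is a minimal sketch: `DailyRequestBudget` and its `warn_ratio` threshold are hypothetical, and resetting on the local calendar date is an assumption, since the exact reset time of the provider's quota is not stated here.

```python
from datetime import date


class DailyRequestBudget:
    """Counts requests per calendar day against a daily cap."""

    def __init__(self, daily_cap=14_400, warn_ratio=0.8, today=date.today):
        self.daily_cap = daily_cap
        self.warn_ratio = warn_ratio  # hypothetical early-warning threshold
        self._today = today           # injectable so the reset can be tested
        self._day = today()
        self.used = 0

    def _roll_over(self):
        """Reset the counter when the calendar date has changed."""
        current = self._today()
        if current != self._day:
            self._day = current
            self.used = 0

    def record(self):
        """Record one request sent to Groq."""
        self._roll_over()
        self.used += 1

    def status(self):
        """Return "ok", "near_cap", or "exhausted" for the current day."""
        self._roll_over()
        if self.used >= self.daily_cap:
            return "exhausted"
        if self.used >= self.daily_cap * self.warn_ratio:
            return "near_cap"
        return "ok"


budget = DailyRequestBudget()
budget.record()  # call once per request
print(budget.status())
```

An app could start splitting traffic to another provider when the status turns "near_cap" rather than waiting for requests to be rejected. The console dashboard remains the source of truth for actual usage.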

Frequently Asked Questions

Is Groq really free?

Yes, Groq's free tier (30,000 tokens/minute, 14,400 requests/day) requires no credit card. The free tier is permanent and covers most personal projects. For production scale, move to the paid tier or stack credits from AI Perks.

How fast is Groq?

Groq runs at 500-3,000+ tokens/second output, 5-30x faster than typical GPU-based inference. First-token latency is sub-second. For real-time applications, no other provider matches this speed.

What models does Groq support?

Groq supports open-source models: Llama 3.1 8B, Llama 3.1 70B, Llama 3.1 405B, Llama 4 Scout, Qwen3 32B, Mixtral 8x7B, Mixtral 8x22B, and DeepSeek R1 Distill. No frontier proprietary models.

Can Groq replace Claude or GPT?

For speed-critical tasks where Llama or Qwen quality is sufficient, yes. For the hardest reasoning, tool use, or vision, no - use Claude or GPT via free credits from AI Perks.

Groq vs Cerebras for free inference?

Groq gives 30K TPM with stricter daily caps. Cerebras gives 1M tokens/day with longer daily runway. Groq is faster per token. Cerebras is more generous in volume. Use both for different workloads.

Does Groq have a startup program?

Groq does not advertise a standalone startup credit program, but Groq credits are bundled inside some accelerator perk packages. Combined with cross-provider credits at AI Perks, you can run heavy paid Groq usage at $0 effective cost.

Is Groq production-ready?

Yes, for speed-critical and cost-sensitive workloads. For the hardest reasoning, pair it with Claude or GPT via free credits at AI Perks. Many production apps use Groq as the primary provider with a frontier model as fallback.

The Bottom Line on Groq Free Tier

Groq is the speed champion of free LLM inference in 2026. 30K TPM free forever, sub-second latency, open-model lineup. Combined with free Claude and GPT credits from AI Perks for premium fallback, you have a complete speed-and-quality stack at $0 cost.

Stop paying for inference speed. Get $7,000-$200,000+ in stacked credits at getaiperks.com.