Quick Summary: Databricks pricing uses a consumption-based model combining Databricks Units (DBUs) charged per workload type with underlying cloud infrastructure costs from AWS, Azure, or GCP. DBU rates vary by subscription tier (Standard, Premium, Enterprise) and compute type, with Jobs compute starting around $0.15/DBU and All-Purpose compute costing 2-3x more. Total monthly costs depend on workload volume, cluster configuration, and optimization practices.

Databricks pricing confuses almost everyone. Ask any engineering lead or CFO one simple question, “How much will Databricks cost us?”, and the answer is almost always some version of “It depends.”

And that’s actually true. The platform operates on a dual-cost structure: Databricks Units (DBUs) for compute workloads plus infrastructure charges from whichever cloud provider powers the platform. What makes this particularly challenging is that DBU rates vary by subscription tier, workload type, and cloud region.

But here’s the thing—once the framework clicks, Databricks pricing becomes predictable. This guide breaks down exactly how costs accumulate, what drives DBU consumption, and where optimization actually moves the needle.

What Is Databricks?

Databricks is a cloud-based platform for big data analytics, data engineering, and collaborative machine learning. Built on Apache Spark, it integrates with major cloud providers—AWS, Azure, and Google Cloud Platform—offering a unified environment for working with Delta Lake and other open-source technologies.

The platform positions itself as a “lakehouse” solution, combining data warehouse structure with data lake flexibility. Teams use Databricks for ETL pipelines, real-time analytics, machine learning model development, and production AI deployments.

What sets Databricks apart architecturally is the separation between compute and storage. Data lives in cloud storage (S3 on AWS, Blob Storage on Azure, Cloud Storage on GCP) while compute clusters process workloads on demand. This separation means costs scale independently: storage costs grow with the volume of data retained, while compute charges apply only while clusters run.

Understanding the Databricks Pricing Model

According to the official website, Databricks offers a pay-as-you-go approach with no up-front costs. Charges accrue at per-second granularity, meaning a cluster running for 10 minutes generates exactly 10 minutes of charges—not a full hour.

The pricing model consists of two components:

- DBU charges: Databricks Units measure normalized compute capacity across different instance types and workload patterns

- Cloud infrastructure costs: Hourly rates for virtual machines, storage, and networking from AWS, Azure, or GCP

These charges stack. Running an m5.xlarge instance on AWS incurs both a DBU charge (the instance consumes 0.690 DBU per hour for certain workloads, multiplied by the per-DBU price) and the infrastructure cost ($0.3795 per hour for the VM itself).

Real talk: this dual structure catches teams off guard. Engineering focuses on cluster sizing and VM selection while finance sees unexpectedly high bills because DBU multipliers weren’t factored into projections.

What Are Databricks Units (DBUs)?

DBUs represent a unit of processing capability. Databricks charges different DBU rates depending on:

- Workload type: Jobs compute, All-Purpose compute, SQL warehouses, serverless, and model serving each carry different rates

- Subscription tier: Standard, Premium, and Enterprise tiers price DBUs differently

- Instance configuration: Larger instances with more vCPUs and memory consume more DBUs per hour

The number of DBUs consumed per hour depends on instance specifications. According to available data, an m5.xlarge instance (4 vCPUs, 16 GB memory) has a DBU rate of 0.690 for certain compute types.

So if that instance runs for one hour on Jobs compute at the Standard tier, the calculation looks like this:

- DBU consumption: 0.690 DBU

- DBU price (example): $0.15 per DBU

- DBU cost: 0.690 × $0.15 = $0.1035

- Infrastructure cost: $0.3795

- Total hourly cost: $0.483
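The arithmetic above fits in a small helper. This is a sketch using the example figures from this section (0.690 DBU per hour, $0.15 per DBU, $0.3795 per hour for the VM); the function name and parameters are illustrative, not part of any Databricks API.

```python
def hourly_cost(dbu_per_hour: float, price_per_dbu: float, vm_per_hour: float) -> dict:
    """Split one cluster-hour into its DBU and infrastructure components."""
    dbu_cost = dbu_per_hour * price_per_dbu  # billed by Databricks
    total = dbu_cost + vm_per_hour           # VM cost is billed by the cloud provider
    return {"dbu_cost": round(dbu_cost, 4), "infra_cost": vm_per_hour, "total": round(total, 4)}


# m5.xlarge on Jobs compute, using the example rates above
print(hourly_cost(dbu_per_hour=0.690, price_per_dbu=0.15, vm_per_hour=0.3795))
# → {'dbu_cost': 0.1035, 'infra_cost': 0.3795, 'total': 0.483}
```

Swapping in an All-Purpose price for `price_per_dbu` shows how only the DBU component moves while the VM cost stays fixed.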

But wait. Switch that same cluster to All-Purpose compute and the DBU price jumps significantly—often 2-3x higher—because interactive workloads include notebook environments and collaboration features.

Databricks Subscription Tiers Explained

Databricks offers three primary subscription tiers, each with different DBU pricing and feature sets. These tiers determine not just cost but also access to governance, security, and collaboration capabilities.

Standard Tier

The entry-level tier provides core Databricks functionality without advanced enterprise features. Standard tier works for teams focused purely on data processing without complex governance requirements.

On Azure, Standard tier Jobs compute costs $0.15 per DBU (US East region data). This represents the baseline DBU rate before multipliers for other compute types or tiers.

Standard tier lacks role-based access control (RBAC), audit logging, and advanced security features—acceptable for development environments but limiting for production workloads handling sensitive data.

Premium Tier (Enterprise on AWS/GCP)

Premium adds capabilities designed for scaling teams and operational efficiency. Key features include:

- Role-Based Access Control (RBAC) for granular permissions

- Audit logs tracking access and actions across workspaces

- Enhanced security and compliance controls

- Collaborative notebooks with versioning

Premium tier charges more per DBU than Standard; the exact multiplier varies by workload type.

On Azure, the Premium tier corresponds to what AWS and GCP call the Enterprise tier—important when comparing cross-cloud pricing.

Enterprise Tier

Enterprise tier delivers maximum governance, compliance, and support for large-scale production deployments. Additional features beyond Premium include:

- Advanced data governance and lineage tracking

- Unity Catalog for centralized metadata management

- Enhanced performance optimizations

- Priority support and SLA commitments

Enterprise represents the highest DBU pricing tier. Teams handling regulated data or requiring sophisticated access controls typically operate at this level despite the cost premium.

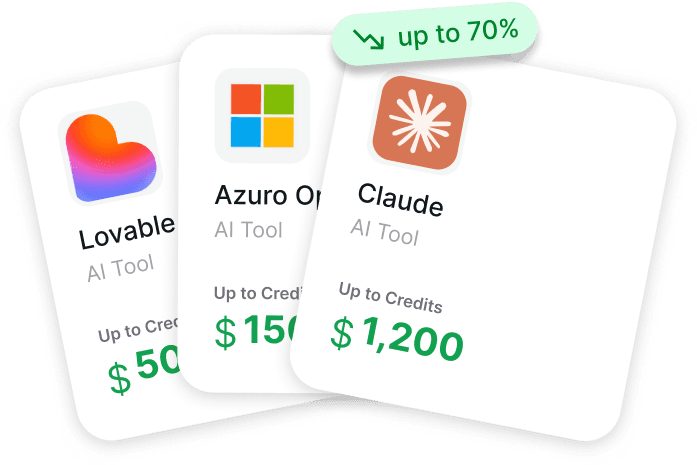

Don’t Overpay for Data Tools Upfront

Looking into pricing for Databricks? The challenge is rarely just one tool – costs add up across compute, storage, and supporting AI tools.

Get AI Perks helps reduce that overall spend before you commit. It aggregates credits, discounts, and partner offers across AI, cloud, and developer tools, so you can access deals that are usually scattered across different programs.

With Get AI Perks, you can:

- access credits for AI and data infrastructure tools

- reduce total cost across your stack

- test tools before committing to full pricing

If you’re comparing Databricks pricing, start by lowering your total costs – check Get AI Perks.

Databricks Compute Types and Pricing

Compute type selection drives significant cost variation. Each workload pattern has different pricing optimized for its use case.

Jobs Compute

Jobs compute powers automated, production ETL workflows and scheduled tasks. These clusters start, execute workloads, and terminate automatically.

Pricing advantage: lowest DBU rates, with All-Purpose compute typically costing 2-3x more per DBU. Starting at $0.15 per DBU on Standard tier (Azure US East), Jobs compute offers the most economical option for predictable workloads.

Teams running regular data pipelines should default to Jobs compute. The cost savings compound rapidly at scale—running the same workload on All-Purpose compute can cost 2-3x more with zero functional benefit.

All-Purpose Compute

All-Purpose clusters support interactive analytics, notebook development, and collaborative exploration. These clusters persist while users actively work, enabling real-time query execution and iterative development.

The tradeoff: significantly higher DBU rates. All-Purpose compute includes notebook environments, collaboration features, and interactive capabilities that justify the premium pricing.

Common mistake: leaving All-Purpose clusters running idle. Unlike Jobs compute that terminates after task completion, All-Purpose clusters continue accruing charges until manually stopped or auto-terminated. Setting aggressive auto-termination (5-10 minutes of inactivity) prevents runaway costs.

SQL Warehouses

SQL warehouses (formerly SQL endpoints) handle BI queries and analytics workloads. Three types exist:

- Serverless: Fastest startup, highest performance, managed infrastructure

- Pro: Photon acceleration, Predictive IO optimization

- Classic: Basic SQL capabilities, lower cost

Serverless SQL warehouses offer superior performance with Photon Engine, Predictive IO, and Intelligent Workload Management—but at premium DBU rates. Pro warehouses provide Photon and Predictive IO without full serverless infrastructure. Classic warehouses deliver basic functionality at reduced cost.

For BI teams running frequent ad-hoc queries, Serverless performance improvements often justify the cost through faster query execution (fewer DBU-hours total despite higher DBU rates).
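The “fewer DBU-hours despite higher rates” argument is a simple inequality: the faster option wins when its runtime drops by a larger factor than its hourly price rises. A sketch with hypothetical numbers; the rates and runtimes are placeholders, not published Databricks prices.

```python
def workload_cost(dbu_per_hour: float, price_per_dbu: float, runtime_hours: float) -> float:
    """DBU cost of one workload: DBUs per hour x price per DBU x hours of runtime."""
    return dbu_per_hour * price_per_dbu * runtime_hours


def faster_option_wins(rate_ratio: float, speedup: float) -> bool:
    """True when a pricier-but-faster option is cheaper overall.

    rate_ratio: how many times more the faster option costs per hour.
    speedup: how many times faster it completes the same work.
    """
    return speedup > rate_ratio


# Hypothetical: serverless costs 1.5x more per hour but finishes in half the time.
# Classic warehouses also incur separately billed VM costs, which this ignores.
classic = workload_cost(dbu_per_hour=10, price_per_dbu=0.22, runtime_hours=1.0)
serverless = workload_cost(dbu_per_hour=10, price_per_dbu=0.33, runtime_hours=0.5)
print(f"classic ${classic:.2f} vs serverless ${serverless:.2f}")
print(faster_option_wins(rate_ratio=1.5, speedup=2.0))
```

If the speedup were only 1.2x at the same 1.5x price ratio, the faster option would cost more; measuring both on a representative query set settles the question.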

Model Serving

Model Serving deploys machine learning models as real-time APIs. Pricing depends on whether deployments use CPU or GPU instances.

According to official pricing data, GPU serving DBU rates vary by instance size:

| Instance Size | GPU Configuration | DBUs per Hour |
|---|---|---|
| Small | T4 or equivalent | 10.48 |
| Medium | A10G × 1 GPU | 20.00 |
| Medium 4X | A10G × 4 GPU | 112.00 |
| Medium 8X | A10G × 8 GPU | 290.80 |
| Large 8X 40GB | A100 40GB × 8 GPU | 538.40 |
| Large 8X 80GB | A100 80GB × 8 GPU | 628.00 |

GPU serving carries substantially higher DBU consumption than standard compute. Teams deploying ML models need accurate traffic projections—underestimating query volume leads to severe cost overruns at these DBU rates.

Serverless Compute

Serverless compute eliminates cluster management entirely. Databricks handles infrastructure provisioning, scaling, and optimization automatically.

Pricing: serverless DBU rates bundle the underlying cloud infrastructure, so they are not directly comparable to classic Jobs compute rates, which exclude the VM cost. Some sources cite serverless at roughly 50% of Jobs Compute DBU rates for equivalent workloads, while others quote higher per-DBU rates; compare total cost (DBU plus infrastructure) rather than the DBU rate alone.

The catch: serverless requires workspace-level enablement and isn’t available in all regions. For supported workloads, serverless can deliver the lowest total cost through bundled infrastructure, efficient resource sharing, and zero management overhead.

Databricks Pricing Across Cloud Providers

Databricks runs on AWS, Azure, and Google Cloud Platform with cloud-specific integrations and pricing variations. The core DBU framework remains consistent, but infrastructure costs and regional availability differ.

Databricks Pricing on AWS

AWS Databricks integrates with S3 for storage, EC2 for compute, and IAM for security. Infrastructure charges follow standard AWS EC2 pricing for selected instance types.

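For example, the m5.xlarge figure used in this guide is $0.3795 per hour on demand in US East. EC2 rates vary by region and change over time, so confirm the current price on the AWS pricing page. Add the DBU charge for the chosen workload type and subscription tier to calculate total cost.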

AWS offers Savings Plans and Reserved Instances for EC2 infrastructure, potentially reducing VM costs by 30-70%. However, these commitments apply only to infrastructure—not DBU charges.

Databricks Pricing on Azure

Azure Databricks exists as a first-party service on Microsoft Azure, offering unified billing and support directly from Microsoft. The Premium tier on Azure corresponds to Enterprise tier on AWS and GCP.

According to official sources, Azure Databricks Standard tier Jobs compute costs $0.15 per DBU in US East region. Infrastructure costs follow Azure VM pricing for selected instance families.

Azure provides unique advantages for organizations already committed to Microsoft ecosystems—unified billing consolidates Databricks charges with other Azure services, and integration with Azure Active Directory simplifies identity management.

Databricks Pricing on Google Cloud Platform

GCP Databricks integrates with Cloud Storage, Compute Engine, and GCP IAM. The platform follows the same DBU framework but leverages GCP’s instance types and regional infrastructure.

GCP typically offers slightly different instance configurations than AWS or Azure, affecting both infrastructure costs and DBU rates. Teams should validate pricing using the Databricks pricing calculator for specific GCP regions.

Cross-Cloud Pricing Comparison

DBU rates remain relatively consistent across clouds for equivalent tiers and compute types. The primary cost variation comes from infrastructure pricing differences between AWS, Azure, and GCP.

Generally speaking, teams should choose cloud providers based on:

- Existing infrastructure commitments and enterprise agreements

- Data locality requirements and compliance needs

- Native service integrations (S3 vs Blob Storage vs Cloud Storage)

- Regional availability for required Databricks features

Cloud provider selection impacts infrastructure costs more than DBU charges. An organization with existing AWS Reserved Instances or Azure commitments can leverage those for significant infrastructure savings.

Using the Databricks Pricing Calculator

The official Databricks pricing calculator helps estimate monthly costs based on workload specifications. Located at the official pricing page, the calculator requires inputs like:

- Cloud provider (AWS, Azure, or GCP)

- Region selection

- Subscription tier (Standard, Premium, Enterprise)

- Compute type (Jobs, All-Purpose, SQL, Serverless)

- Instance type and cluster size

- Expected runtime hours per month

The calculator outputs estimated DBU consumption and total monthly costs combining DBU charges with infrastructure fees.

Now, this is where it gets interesting. The calculator provides estimates—actual costs depend on real usage patterns. Teams frequently underestimate:

- Cluster idle time before auto-termination kicks in

- Development and testing workload volume

- Spillover from interactive development to production clusters

Best practice: run pilot workloads and monitor actual billable usage through system tables before committing to large-scale deployments. The billable usage system table (system.billing.usage) provides granular consumption data for cost analysis.

What Drives Databricks Costs?

Understanding cost drivers helps target optimization efforts effectively. Several factors compound to determine monthly spend.

Data Volume and Workload Velocity

More data requires more compute to process. Batch jobs processing terabytes daily consume significantly more DBU-hours than pipelines handling gigabytes.

Velocity matters too. Real-time streaming workloads require always-on clusters, accumulating charges continuously. Batch processing runs clusters only during active windows, reducing total runtime.

Cluster Configuration and Instance Selection

Larger instances with more vCPUs and memory carry higher DBU rates and infrastructure costs. An m5.8xlarge (32 vCPUs, 128 GB) costs substantially more per hour than an m5.xlarge (4 vCPUs, 16 GB).

The optimization challenge: oversized clusters waste money through unnecessary capacity, while undersized clusters run longer to complete workloads—potentially costing more in total DBU-hours.

Workload Type Distribution

The mix of compute types determines average DBU rates. Organizations running primarily Jobs compute pay less than those heavily utilizing All-Purpose clusters.

Engineering workloads (ETL) typically cost the least, while data science workloads (ML development) can cost 3-4x more due to All-Purpose cluster usage and longer experimentation cycles.
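The effect of the workload mix can be expressed as a DBU-weighted average price. A sketch with a hypothetical mix: $0.15 per DBU for Jobs compute (the example rate used throughout) and a placeholder $0.40 for All-Purpose, inside the 2-3x range cited earlier.

```python
def blended_price(mix: dict[str, tuple[float, float]]) -> float:
    """DBU-weighted average price per DBU.

    mix maps compute type -> (monthly DBUs, price per DBU).
    """
    total_dbus = sum(dbus for dbus, _ in mix.values())
    total_cost = sum(dbus * price for dbus, price in mix.values())
    return total_cost / total_dbus


# Hypothetical monthly DBU volumes for two teams
engineering = {"jobs": (900, 0.15), "all_purpose": (100, 0.40)}
data_science = {"jobs": (200, 0.15), "all_purpose": (800, 0.40)}
print(round(blended_price(engineering), 3), round(blended_price(data_science), 3))
```

Here the mix alone doubles the blended price ($0.175 vs $0.35 per DBU); longer experimentation cycles then add DBU volume on top of that.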

Cluster Idle Time and Auto-Termination

All-Purpose clusters continue accruing charges while idle unless auto-termination settings stop them. A cluster left running overnight accrues 8-12 hours of unnecessary charges.

Setting auto-termination to 5-10 minutes for development clusters prevents runaway costs. Production Jobs clusters should terminate immediately after task completion.
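To see why auto-termination matters, compare an overnight idle period with a 10-minute auto-termination window. This sketch reuses the m5.xlarge figures from earlier and assumes a hypothetical All-Purpose price of $0.40 per DBU (the text only says All-Purpose runs 2-3x the Jobs rate). Per-second billing means partial hours are charged proportionally.

```python
def idle_cost(idle_minutes: float, dbu_per_hour: float, price_per_dbu: float,
              vm_per_hour: float, nodes: int = 1) -> float:
    """Cost of a cluster sitting idle; DBU and VM charges accrue regardless of activity."""
    hours = idle_minutes / 60
    return nodes * hours * (dbu_per_hour * price_per_dbu + vm_per_hour)


# m5.xlarge figures from earlier; $0.40/DBU is a hypothetical All-Purpose price
params = dict(dbu_per_hour=0.690, price_per_dbu=0.40, vm_per_hour=0.3795)
overnight = idle_cost(10 * 60, **params)  # left running for 10 idle hours
auto_off = idle_cost(10, **params)        # stopped after 10 idle minutes
print(f"overnight: ${overnight:.2f}  with auto-termination: ${auto_off:.2f}")
```

Pass `nodes` for a multi-node cluster; the gap between the two scenarios scales linearly with cluster size.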

Storage Costs

While storage costs less per GB than compute, large data lakes accumulate significant monthly charges. Cloud storage pricing varies:

- AWS S3 Standard: about $0.023 per GB-month for the first 50 TB in US East (N. Virginia), with lower per-GB rates at higher volume tiers and variation by region

- Azure Blob Storage: similar pricing with tiering options

- GCP Cloud Storage: comparable rates with regional variations

Delta Lake’s optimization features help control storage costs through file compaction and intelligent data layout.

Databricks Cost Optimization Strategies

Effective optimization goes beyond theoretical best practices to techniques that actually reduce monthly bills. Here’s what works at scale.

Match Compute Types to Workload Patterns

Use Jobs compute for automated pipelines and scheduled tasks. Reserve All-Purpose clusters exclusively for interactive development and exploration.

Using job clusters with spot instances can reduce VM costs by up to 50% for fault-tolerant workloads, with DBU charges remaining constant. Spot instances provide discounted infrastructure pricing in exchange for potential interruptions.

Implement Aggressive Auto-Termination

Configure auto-termination for All-Purpose clusters at 5-10 minutes of inactivity. Development clusters sitting idle consume DBUs with zero value generation.

Production Jobs clusters should terminate immediately after workload completion. Databricks charges per second—clusters stopped immediately after task execution avoid unnecessary charges.
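In practice, auto-termination is a field on the cluster definition. Below is a minimal sketch of a request body for the Clusters API create endpoint (`POST /api/2.0/clusters/create`). The cluster name, runtime version, and worker count are placeholders, and the 5-10 minute check is a hypothetical team guardrail, not a Databricks feature. The payload is only built here, not sent.

```python
import json


def dev_cluster_spec(name: str, autotermination_minutes: int = 10) -> dict:
    """Build a Clusters API create payload for an interactive dev cluster.

    Hypothetical guardrail: reject idle timeouts outside a 5-10 minute window.
    """
    if not 5 <= autotermination_minutes <= 10:
        raise ValueError("auto-termination should be 5-10 minutes for dev clusters")
    return {
        "cluster_name": name,
        "spark_version": "15.4.x-scala2.12",  # placeholder runtime version
        "node_type_id": "m5.xlarge",          # AWS node type used in the examples
        "num_workers": 2,
        "autotermination_minutes": autotermination_minutes,
    }


print(json.dumps(dev_cluster_spec("dev-exploration"), indent=2))
```

Jobs compute needs no such setting, since job clusters shut down when their run finishes.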

Optimize Cluster Sizing

Right-size clusters based on workload requirements rather than defaulting to large instances. Start with smaller configurations and scale up only when performance metrics indicate bottlenecks.

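Monitor consumption through the billable usage system table alongside cluster utilization metrics: the usage table shows what each cluster consumed, while utilization metrics show whether that capacity was needed. Clusters consistently showing low CPU or memory utilization signal oversizing opportunities.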

Enable Photon Acceleration

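Photon is a built-in vectorized query engine that accelerates query execution for SQL and DataFrame operations. Faster execution means fewer DBU-hours consumed. On classic compute, Photon-enabled clusters can be billed at a higher DBU rate, so the net saving depends on the speedup outweighing that increase.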

That said, Photon works best for SQL and DataFrame operations. Complex Python UDFs or custom code may see limited acceleration.

Leverage Serverless When Available

Serverless compute DBU rates are typically higher (e.g., $0.35-$0.40 per DBU) than classic Jobs compute DBU rates ($0.07-$0.15 per DBU), but they bundle the infrastructure cost, so there is no separate VM bill from the cloud provider.

Serverless also eliminates cluster management overhead and optimizes infrastructure utilization automatically, which reduces operational costs that a rate-by-rate comparison does not capture.
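Because serverless folds infrastructure into the DBU price, the fair comparison is total cost for the same job. This sketch takes one hour of the m5.xlarge example on classic Jobs compute and asks how many serverless DBUs the same job could consume at $0.35 per DBU (the low end of the range above) before serverless costs more. The function names are hypothetical helpers, and real DBU counts for a job differ between classic and serverless, so measure them on a pilot run.

```python
def classic_total(dbus: float, price_per_dbu: float, vm_cost: float) -> float:
    """Classic Jobs compute: Databricks bills DBUs, the cloud provider bills the VMs."""
    return dbus * price_per_dbu + vm_cost


def serverless_total(dbus: float, price_per_dbu: float) -> float:
    """Serverless: infrastructure is bundled into the (higher) DBU price."""
    return dbus * price_per_dbu


def serverless_breakeven_dbus(classic_cost: float, serverless_price: float) -> float:
    """Maximum serverless DBUs the same job can use before serverless costs more."""
    return classic_cost / serverless_price


# One hour of the m5.xlarge example on classic Jobs compute
classic = classic_total(dbus=0.690, price_per_dbu=0.15, vm_cost=0.3795)
limit = serverless_breakeven_dbus(classic, serverless_price=0.35)
print(f"classic: ${classic:.3f}; serverless is cheaper below {limit:.2f} DBUs")
```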

Use Spot Instances for Fault-Tolerant Workloads

AWS Spot Instances and Azure Spot VMs provide infrastructure at 60-90% discounts compared to on-demand pricing. Jobs compute workloads with built-in retry logic can leverage spot instances to reduce infrastructure costs substantially.

DBU charges remain constant—spot instances only discount the infrastructure component. But that infrastructure represents 40-60% of total costs for many workloads.
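Because only the infrastructure share is discounted, the overall saving equals the infrastructure share multiplied by the spot discount. A quick sketch over the ranges quoted above (40-60% infrastructure share, 60-90% spot discount); the same formula applies to Savings Plans and Reserved Instances, which also discount only the VM side. The helper name is illustrative.

```python
def total_savings_fraction(infra_share: float, infra_discount: float) -> float:
    """Fraction of the total bill saved when only infrastructure is discounted.

    DBU charges are unchanged, so savings = infra_share * infra_discount.
    """
    if not (0 <= infra_share <= 1 and 0 <= infra_discount <= 1):
        raise ValueError("shares and discounts must be between 0 and 1")
    return infra_share * infra_discount


for share in (0.40, 0.60):
    for discount in (0.60, 0.90):
        saved = total_savings_fraction(share, discount)
        print(f"infra {share:.0%} of bill, spot discount {discount:.0%}: total saving {saved:.0%}")
```

With those ranges, spot instances cut the total bill by roughly 24-54%, well below the headline spot discount.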

Monitor Costs Through System Tables

The billable usage system table (system.billing.usage) centralizes consumption data across all workspace regions. According to official documentation, this table updates regularly with DBU consumption, SKU details, and usage metadata.

Sample queries can identify cost drivers:

- Highest DBU-consuming workspaces and clusters

- All-Purpose clusters with excessive idle time

- Workloads running on oversized instances

- Unexpected usage spikes requiring investigation
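A sketch of the first query on that list. The SQL targets `system.billing.usage` and assumes the `workspace_id`, `sku_name`, `usage_date`, `usage_unit`, `usage_quantity`, and `usage_metadata.cluster_id` columns; check the schema in your account before relying on it. In a Databricks notebook it could be run with `spark.sql(TOP_CLUSTERS_SQL)`. The pure-Python function applies the same aggregation to a few hypothetical sample rows (with simplified SKU names) so the logic can be checked offline.

```python
from collections import defaultdict

# Assumed columns of system.billing.usage; verify against your account's schema
TOP_CLUSTERS_SQL = """
SELECT workspace_id,
       usage_metadata.cluster_id AS cluster_id,
       sku_name,
       SUM(usage_quantity) AS dbus
FROM system.billing.usage
WHERE usage_date >= date_sub(current_date(), 30)
  AND usage_unit = 'DBU'
  AND usage_metadata.cluster_id IS NOT NULL
GROUP BY workspace_id, usage_metadata.cluster_id, sku_name
ORDER BY dbus DESC
LIMIT 20
"""


def top_clusters(rows: list[dict], limit: int = 20) -> list[tuple]:
    """Offline equivalent: total DBUs per (workspace, cluster, SKU), largest first."""
    totals: dict[tuple, float] = defaultdict(float)
    for row in rows:
        if row.get("cluster_id") is None:
            continue  # skip usage not tied to a cluster
        key = (row["workspace_id"], row["cluster_id"], row["sku_name"])
        totals[key] += row["usage_quantity"]
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)[:limit]


# Hypothetical sample rows shaped like the query's output columns
sample = [
    {"workspace_id": "ws1", "cluster_id": "c-etl", "sku_name": "JOBS", "usage_quantity": 12.0},
    {"workspace_id": "ws1", "cluster_id": "c-dev", "sku_name": "ALL_PURPOSE", "usage_quantity": 30.5},
    {"workspace_id": "ws1", "cluster_id": "c-etl", "sku_name": "JOBS", "usage_quantity": 8.0},
    {"workspace_id": "ws2", "cluster_id": None, "sku_name": "SQL", "usage_quantity": 50.0},
]
for key, dbus in top_clusters(sample):
    print(key, dbus)
```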

Monitoring costs operationally—rather than reviewing monthly invoices after the fact—enables proactive optimization.

Databricks Pricing Challenges and Gotchas

Several aspects of Databricks pricing catch teams unprepared. Awareness helps avoid costly surprises.

DBU and Infrastructure Costs Bill Separately

Cloud providers bill infrastructure charges (VMs, storage, networking) while Databricks bills DBU consumption. Teams need to reconcile both to understand total cost of ownership.

According to Databricks’ Cloud Infra Cost Field Solution, companies can join Databricks usage data with cloud infrastructure costs for unified TCO views at the cluster and tag level.

Tier Confusion Between Azure and AWS/GCP

Azure’s Premium tier corresponds to Enterprise tier on AWS and GCP. Documentation sometimes references different tier names for equivalent functionality, creating confusion during cross-cloud comparisons.

Always verify tier feature sets rather than assuming name equivalence.

Hidden Costs in Fine-Grained Access Control

On Databricks Runtime 15.4 LTS and above, fine-grained access controls (row filters, column masks, dynamic views) on dedicated compute are enforced by using serverless compute for data filtering. This requires workspace-level serverless enablement and adds serverless charges even when the primary workload runs on a dedicated cluster.

Automatic Cluster Updates Add Compliance Costs

Enabling automatic cluster updates for security patching automatically adds the Enhanced Security and Compliance add-on charges. This applies to classic compute plane resources but not serverless.

The feature provides value through automated patching, but teams should factor the add-on cost into budgets.

Model Serving GPU Costs Escalate Quickly

GPU serving consumes 10-628 DBUs per hour depending on configuration. A Large 8X 80GB instance (A100 80GB × 8 GPU) running continuously costs 628 DBUs per hour—plus infrastructure charges for the GPU instances themselves.

Using $0.15 per DBU as an example, that would be approximately $94.20 per hour in DBU charges alone, or roughly $68,800 per month for continuous operation (about 730 hours). Add infrastructure costs and the total becomes substantial.
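The same arithmetic applies to every row of the GPU Model Serving table earlier in this guide. A sketch that reuses the example $0.15 per DBU (a placeholder, since serving rates differ from Jobs rates) and assumes about 730 hours in a month; GPU infrastructure charges are excluded.

```python
HOURS_PER_MONTH = 730  # ~24 x 365 / 12; assumes continuous operation

# DBUs per hour from the GPU Model Serving table
GPU_SERVING_DBU_PER_HOUR = {
    "Small": 10.48,
    "Medium": 20.00,
    "Medium 4X": 112.00,
    "Medium 8X": 290.80,
    "Large 8X 40GB": 538.40,
    "Large 8X 80GB": 628.00,
}


def monthly_dbu_cost(dbu_per_hour: float, price_per_dbu: float,
                     hours: float = HOURS_PER_MONTH) -> float:
    """DBU charges only; the GPU instance cost is billed on top of this."""
    return dbu_per_hour * price_per_dbu * hours


for size, dbus in GPU_SERVING_DBU_PER_HOUR.items():
    print(f"{size:>14}: ${monthly_dbu_cost(dbus, 0.15):>10,.0f} per month")
```

Scaling endpoints down during low-traffic hours reduces `hours`, which is the only lever in this formula once the instance size is fixed.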

Estimating Monthly Databricks Costs

Accurate cost estimation requires understanding the “3 Vs” of data workloads: Volume, Velocity, and Variety.

Volume: More data means more storage plus more compute to process it. Teams processing petabyte-scale data lakes consume proportionally more DBUs than those working with terabytes.

Velocity: Real-time streaming equals always-on clusters. Batch processing runs clusters periodically, reducing total uptime and associated charges.

Variety: Unstructured data (images, videos, documents) costs more to process than structured SQL tables. Complex transformations consume more compute resources per record.

A practical estimation approach:

- Identify workload types and expected monthly runtime hours

- Select appropriate compute types (Jobs vs All-Purpose vs SQL)

- Choose subscription tier based on governance requirements

- Use the pricing calculator with specific instance types and cluster configurations

- Add 20-30% buffer for development, testing, and unexpected usage
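The steps above reduce to a sum over workloads plus a buffer. A minimal sketch; the workloads, hours, and rates are hypothetical inputs that would normally come from the pricing calculator (the cluster figures are multiples of the m5.xlarge example), and the 25% buffer sits inside the 20-30% range suggested above.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    hours_per_month: float
    dbu_per_hour: float   # for the whole cluster
    price_per_dbu: float  # depends on tier and compute type
    vm_per_hour: float    # cloud infrastructure for the whole cluster


def monthly_estimate(workloads: list[Workload], buffer: float = 0.25) -> float:
    """Sum DBU and infrastructure cost across workloads, then add a contingency buffer."""
    base = sum(w.hours_per_month * (w.dbu_per_hour * w.price_per_dbu + w.vm_per_hour)
               for w in workloads)
    return base * (1 + buffer)


# Hypothetical plan: 4 x m5.xlarge for ETL, 2 x m5.xlarge for notebooks
plan = [
    Workload("nightly ETL (Jobs)", 60, dbu_per_hour=2.76, price_per_dbu=0.15, vm_per_hour=1.518),
    Workload("dev notebooks (All-Purpose)", 120, dbu_per_hour=1.38, price_per_dbu=0.40, vm_per_hour=0.759),
]
print(f"Estimated monthly cost: ${monthly_estimate(plan):,.2f}")
```

Once pilot workloads run, replacing the estimated hours with measured usage tightens the forecast.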

Organizations with existing Spark workloads can benchmark DBU consumption per data volume processed, then extrapolate to expected Databricks usage. Teams migrating from on-premises Hadoop should factor in learning curve time when optimizing Databricks costs.

Frequently Asked Questions

How much does Databricks cost per month?

Monthly costs vary dramatically based on workload volume, compute type, subscription tier, and cloud provider. Small teams running development workloads might spend hundreds monthly, while enterprises processing petabyte-scale data can incur six-figure bills. According to the official website, Databricks offers pay-as-you-go pricing with no up-front costs—actual spend depends on usage. Use the pricing calculator with specific workload parameters for accurate estimates.

What is a DBU and how is it calculated?

A Databricks Unit (DBU) measures normalized compute capacity. DBU consumption depends on instance type specifications (vCPUs, memory) and workload type. For example, an m5.xlarge instance consumes 0.690 DBU per hour for certain compute types. The calculation multiplies DBU consumption by the per-DBU price (which varies by subscription tier and compute type) to determine DBU charges, separate from cloud infrastructure costs.

Is Databricks cheaper on AWS, Azure, or GCP?

DBU rates remain relatively consistent across cloud providers for equivalent tiers and compute types. Infrastructure costs vary based on each provider’s VM pricing and regional availability. Organizations with existing cloud commitments, Reserved Instances, or enterprise agreements can leverage those for infrastructure savings. Generally speaking, teams should choose cloud providers based on existing infrastructure, data locality, and native service integrations rather than marginal pricing differences.

What’s the difference between Standard, Premium, and Enterprise tiers?

Standard provides core Databricks functionality without advanced governance features. Premium adds role-based access control (RBAC), audit logs, enhanced security, and collaboration features—typically costing 30-50% more per DBU. Enterprise delivers maximum governance, Unity Catalog for centralized metadata management, and priority support at the highest DBU rates. On Azure, Premium tier corresponds to Enterprise tier on AWS and GCP.

How can I reduce Databricks costs?

Use Jobs compute instead of All-Purpose for automated workloads (saves 50-70%), enable aggressive auto-termination (5-10 minutes) for development clusters, move to serverless compute where available (infrastructure is bundled into the DBU price and cluster management disappears), leverage spot instances for fault-tolerant workloads (60-90% infrastructure savings), enable Photon acceleration for faster execution, right-size clusters based on actual resource utilization, and monitor costs through the system.billing.usage table to identify optimization opportunities.

Does Databricks charge for storage separately?

Databricks charges for compute (DBUs plus infrastructure) but not storage directly. Data stored in cloud provider storage (S3, Blob Storage, Cloud Storage) incurs standard cloud storage fees billed by AWS, Azure, or GCP—typically around $0.023 per GB monthly for standard tiers. Delta Lake optimization features help control storage costs through file compaction and efficient data layout.

What are the hidden costs in Databricks pricing?

Common hidden costs include All-Purpose cluster idle time before auto-termination, development and testing workload spillover, serverless charges for fine-grained access controls on dedicated compute (Runtime 15.4 LTS+), Enhanced Security and Compliance add-on when enabling automatic cluster updates, and unexpectedly high GPU serving costs for ML model deployments. Organizations should factor 20-30% buffer above calculator estimates for these contingencies.

Conclusion: Making Databricks Pricing Work

Databricks pricing seems complex because it reflects genuine workload diversity—batch ETL, interactive analytics, real-time streaming, and GPU-accelerated ML serving all have different resource profiles and cost structures.

But the framework becomes manageable once the components click: DBU consumption based on compute type and tier, plus infrastructure costs from cloud providers, billed per-second for actual usage.

Cost control comes down to matching compute types to workload patterns, implementing aggressive auto-termination, leveraging serverless where available, and monitoring usage continuously through system tables rather than reacting to monthly invoices.

Start with the official pricing calculator to establish baseline estimates. Run pilot workloads to validate assumptions. Monitor billable usage data to identify optimization opportunities. And remember—the goal isn’t minimizing costs in absolute terms but maximizing value delivered per dollar spent.

Ready to optimize spending? Access the Databricks pricing calculator on the official website, enable the billable usage system table for monitoring, and start benchmarking actual DBU consumption against workload value delivered.